AI application

AI application: product structure changed in 2026

LLMs such as Claude, GPT, and Gemini have become product foundations. Building on them means working with RAG, AI agents, fine-tuning, and vector search.

A production-grade AI application needs more than a model call: rate limit management, a fallback strategy, cost optimization, prompt engineering, and evaluation are all part of our standard package.

Standard for AI application delivery

- Claude / GPT / Gemini API integration

- RAG (vector database + retrieval)

- AI agent (multi-step, tool use)

- Prompt engineering + evaluation

- Cost monitoring + rate limit management

Core patterns of AI applications

Modern AI applications run on four main patterns:

- Single-shot LLM call: general chatbot, content generation

- RAG: connecting the LLM to user or company data (knowledge base, document QA)

- Multi-step agent: tool use, multi-turn conversation

- Fine-tuned model: company-specific language and tone

We pick the pattern by product need. RAG is enough for most enterprise use cases, an agent is added only when the task needs multiple steps or tools, and fine-tuning is a last resort.
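To make the difference between a single-shot call and a multi-step agent concrete, here is a minimal sketch of an agent loop in Python. Everything in it is illustrative: `run_agent`, `fake_model`, and the `order_status` tool are hypothetical names, and the model is a stub rather than a real Claude, GPT, or Gemini call.

```python
def run_agent(model_step, tools, user_message, max_steps=5):
    """Ask the model, run any tool it requests, feed the result back, repeat."""
    history = [{"role": "user", "content": user_message}]
    for _ in range(max_steps):
        action = model_step(history)
        if action["type"] == "final":
            return action["content"]
        # The model asked for a tool: execute it and append the result.
        result = tools[action["tool"]](**action["args"])
        history.append({"role": "tool", "name": action["tool"], "content": str(result)})
    raise RuntimeError("agent did not finish within max_steps")


def fake_model(history):
    """Hypothetical stub standing in for a real LLM API call."""
    last = history[-1]
    if last["role"] == "tool":
        return {"type": "final", "content": f"Order 42 is {last['content']}"}
    return {"type": "tool_call", "tool": "order_status", "args": {"order_id": 42}}


tools = {"order_status": lambda order_id: "shipped"}  # hypothetical tool
answer = run_agent(fake_model, tools, "Where is order 42?")
```

A single-shot call is the same loop with `max_steps=1` and no tools. The `max_steps` cap matters in practice because every extra step is another billed model call.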

Vector database and RAG architecture

RAG is the standard way for an AI application to work with company-specific data. For the vector database we choose among Postgres (pgvector), Pinecone, Weaviate, and Qdrant, depending on project size.

Embedding model choice (OpenAI text-embedding-3, Cohere Embed, Voyage AI) is a separate decision, because embedding quality directly affects RAG output. Hybrid search (vector + keyword) is our standard.
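Hybrid search needs a way to merge the vector ranking and the keyword ranking into one list. The sketch below uses reciprocal rank fusion (RRF), one common option, and is not necessarily the method used in any particular project. The document ids and both result lists are made up for illustration.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document ids into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is a commonly used default.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id so the order is deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))


vector_hits = ["doc_a", "doc_b", "doc_c"]   # hypothetical vector-search results
keyword_hits = ["doc_c", "doc_a", "doc_d"]  # hypothetical keyword-search results
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)  # ['doc_a', 'doc_c', 'doc_b', 'doc_d']
```

Documents that both retrievers rank highly (`doc_a`, `doc_c`) rise to the top, while a document found by only one retriever still makes the list.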

Cost and rate-limit management

Two things need watching when an AI application reaches production: cost and rate limits. LLM API usage is billed per token and adds up quickly, so cost monitoring is mandatory from day one. We configure usage alerts and spend limits in the Anthropic and OpenAI dashboards.
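Provider dashboards can be complemented by tracking spend inside the application. The following is a minimal sketch under stated assumptions: the model names and per-token prices are hypothetical placeholders, not real price lists, and `SpendTracker` is an illustrative helper rather than part of any SDK.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens; real prices vary by provider and model.
PRICES = {
    "large-model": {"input": 3.00, "output": 15.00},
    "small-model": {"input": 0.25, "output": 1.25},
}


def request_cost(model, input_tokens, output_tokens):
    """Cost of one request in USD, from the token counts the API reports."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


@dataclass
class SpendTracker:
    daily_budget_usd: float
    alert_ratio: float = 0.8  # alert once 80% of the budget is used
    spent_usd: float = 0.0

    def record(self, model, input_tokens, output_tokens):
        """Add one request to the running total; return True if an alert is due."""
        self.spent_usd += request_cost(model, input_tokens, output_tokens)
        return self.spent_usd >= self.alert_ratio * self.daily_budget_usd


tracker = SpendTracker(daily_budget_usd=10.0)
first_alert = tracker.record("large-model", 1_000_000, 0)   # $3.00 so far
second_alert = tracker.record("large-model", 0, 400_000)    # $9.00 so far
```

With a $10 daily budget the first call stays below the 80% threshold and the second crosses it, which is the point at which a real system would notify someone or start routing to a cheaper model.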

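Rate-limit management combines queueing, exponential backoff, and a fallback model (a smaller, cheaper model that takes over when the primary one is throttled). On the cost side, prompt caching (offered by Anthropic and OpenAI, among others) and batch APIs lower the bill itself. On suitable workloads these measures can cut cost by 50–80%.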
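Exponential backoff with a fallback model can be sketched in a few lines. This is an illustration, not a drop-in client: `RateLimitError` is a hypothetical stand-in for whatever error a provider SDK raises on HTTP 429, and `call` is any function that takes a model name and returns a response.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's rate-limit (HTTP 429) error."""


def call_with_backoff(call, models, max_retries=3, base_delay=1.0, sleep=time.sleep):
    """Try each model in order; retry rate-limited calls with exponential backoff."""
    for model in models:
        for attempt in range(max_retries):
            try:
                return call(model)
            except RateLimitError:
                if attempt < max_retries - 1:
                    # Delay doubles each retry (1x, 2x, 4x base) plus random jitter.
                    delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
                    sleep(delay)
        # Retries exhausted for this model: fall through to the next one.
    raise RuntimeError("all models are rate limited")


def flaky_call(model):
    """Hypothetical provider call: the primary model is always throttled."""
    if model == "primary-model":
        raise RateLimitError()
    return f"answer from {model}"


delays = []  # capture delays instead of sleeping, to keep the demo instant
result = call_with_backoff(flaky_call, ["primary-model", "fallback-model"],
                           base_delay=0.01, sleep=delays.append)
```

The jitter spreads retries from many clients over time so they do not all hit the API again at the same instant. Passing `sleep` as a parameter keeps the function easy to test.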

Evaluation and prompt engineering

AI application quality comes from prompt engineering and evaluation. Writing good prompts — context, examples, structured output — is half the quality. The other half is evaluation: which prompt version answered better?

We set up A/B test frameworks and eval suites, bring in human annotators to label outputs along the way, and improve prompts iteratively based on the results.
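At its simplest, an eval suite runs every prompt version against the same test cases and compares scores. The sketch below assumes exact-match scoring, which only suits short factual answers, and uses a stub in place of a real model. `compare_prompts`, `fake_generate`, and the two prompt versions are illustrative.

```python
def compare_prompts(prompt_versions, cases, generate):
    """Score each prompt version as the fraction of cases it answers correctly."""
    results = {}
    for name, template in prompt_versions.items():
        correct = 0
        for case in cases:
            answer = generate(template.format(question=case["question"]))
            # Exact match after normalizing case and surrounding whitespace.
            if answer.strip().lower() == case["expected"].strip().lower():
                correct += 1
        results[name] = correct / len(cases)
    return results


def fake_generate(prompt):
    """Hypothetical stub model: terse only when told to answer in one word."""
    answer = "Paris" if "France" in prompt else "Tokyo"
    return answer if "one word" in prompt else f"The capital is {answer}."


prompt_versions = {
    "v1": "{question}",
    "v2": "Answer with one word. {question}",
}
cases = [
    {"question": "What is the capital of France?", "expected": "Paris"},
    {"question": "What is the capital of Japan?", "expected": "Tokyo"},
]
results = compare_prompts(prompt_versions, cases, fake_generate)
print(results)  # {'v1': 0.0, 'v2': 1.0}
```

For free-form answers, exact match would be replaced by human annotation or a rubric-based grader. The surrounding loop stays the same.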

Frequently asked questions

Which LLM do you use?

Anthropic Claude (often preferred), OpenAI GPT, Google Gemini, Mistral. We pick based on use case and cost.

Is company data safe?

Yes. Under their enterprise and API terms, Anthropic and OpenAI commit by default not to use customer data for model training. Where data must stay on-premise, we can run open models (Llama / Mistral) locally.

How is AI application cost calculated?

Two parts: development cost (fixed scope) and runtime LLM API cost (usage-based). We estimate runtime cost during discovery based on usage volume.

Is fine-tuning necessary?

Most often, no. RAG plus good prompt engineering is enough. Fine-tuning is a last resort, used when a special tone, format, or domain language is required.

Which sectors have you applied AI in?

Our work is sector-agnostic and organized by use case: customer support automation, content generation, document QA, code analysis, sales process automation, and manufacturing-metric interpretation.

Locations

Locations where we ship AI application projects

We deliver AI application development across global hubs.

Selected projects

FitTrack Mobile App

Personal fitness tracking and workout planning app. 50,000+ active users on iOS and Android platforms.

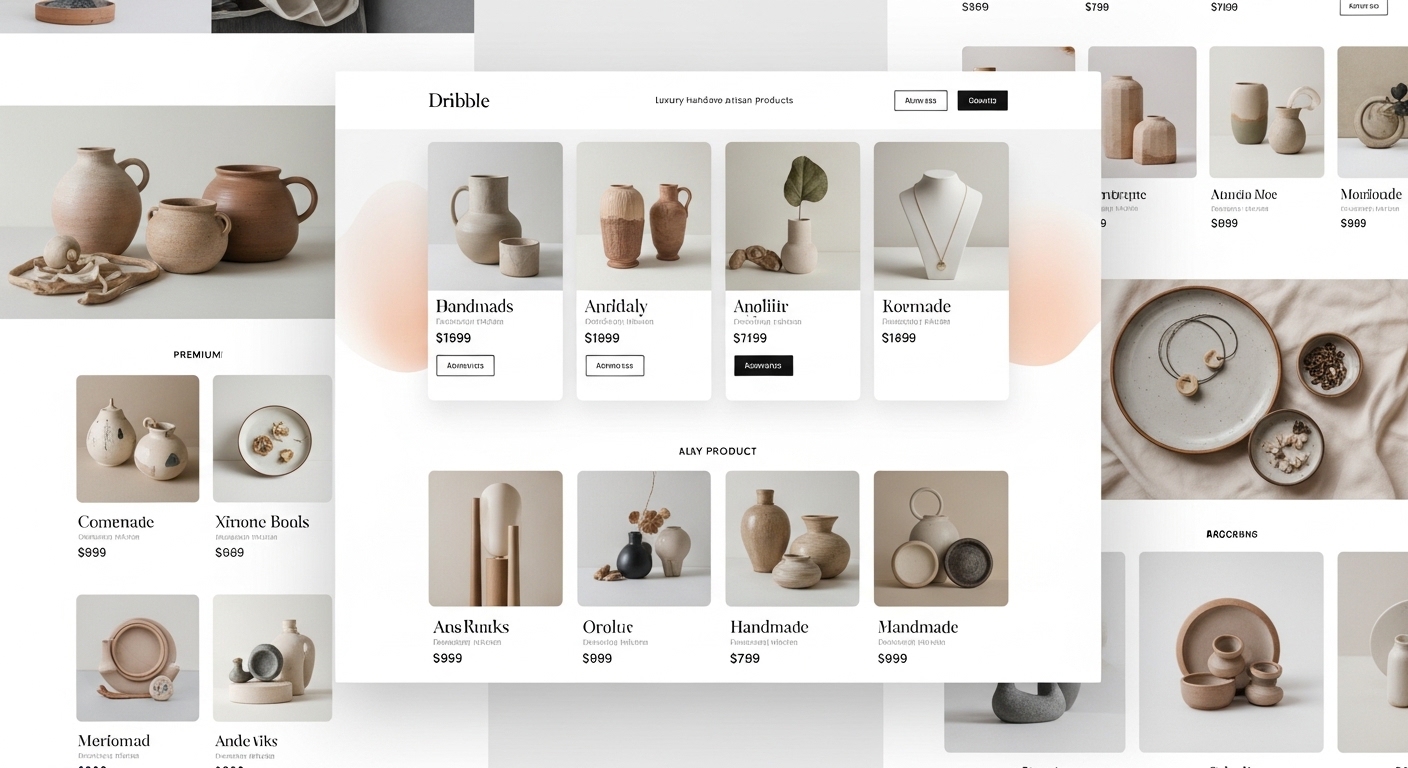

ShopZone E-Commerce Platform

Multi-vendor e-commerce platform. Integrated payment system, inventory management, and analytics dashboard.

Nova Corporate Website

Modern corporate website for Nova, an energy sector company.

Start an AI application project

After a 30-minute discovery call we share a written AI application roadmap.